| title | body | html_url | comments | pull_request | number | is_pull_request |
|---|---|---|---|---|---|---|

Fix bertscore references | I added some type checking for metrics. There was an issue where a metric could interpret a string as a list. A `ValueError` is now raised if a string is given instead of a list.

Moreover I added support for both strings and lists of strings for `references` in `bertscore`, as it is the case in the original code.

Both... | https://github.com/huggingface/datasets/pull/300 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/300",

"html_url": "https://github.com/huggingface/datasets/pull/300",

"diff_url": "https://github.com/huggingface/datasets/pull/300.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/300.patch",

"merged_at": "2020-06-23T14:47:36"... | 300 | true |

remove some print in snli file | This PR removes unwanted `print` statements in some files such as `snli.py` | https://github.com/huggingface/datasets/pull/299 | [

"I guess you can just rebase from master to fix the CI"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/299",

"html_url": "https://github.com/huggingface/datasets/pull/299",

"diff_url": "https://github.com/huggingface/datasets/pull/299.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/299.patch",

"merged_at": "2020-06-23T08:10:44"... | 299 | true |

Add searchable datasets | # Better support for Numpy format + Add Indexed Datasets

I was working on adding Indexed Datasets but in the meantime I had to also add more support for Numpy arrays in the lib.

## Better support for Numpy format

New features:

- New fast method to convert Numpy arrays from Arrow structure (up to x100 speed up... | https://github.com/huggingface/datasets/pull/298 | [

"Looks very cool! Only looked at it superficially though",

"Alright I think I've checked all your comments, thanks :)\r\n\r\nMoreover I just added a way to serialize faiss indexes.\r\nThis is important because for big datasets the index construction can take some time.\r\n\r\nExamples:\r\n\r\n```python\r\nds = nl... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/298",

"html_url": "https://github.com/huggingface/datasets/pull/298",

"diff_url": "https://github.com/huggingface/datasets/pull/298.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/298.patch",

"merged_at": "2020-06-26T07:50:43"... | 298 | true |

Error in Demo for Specific Datasets | Selecting `natural_questions` or `newsroom` dataset in the online demo results in an error similar to the following.

| https://github.com/huggingface/datasets/issues/297 | [

"Thanks for reporting these errors :)\r\n\r\nI can actually see two issues here.\r\n\r\nFirst, datasets like `natural_questions` require apache_beam to be processed. Right now the import is not at the right place so we have this error message. However, even the imports are fixed, the nlp viewer doesn't actually hav... | null | 297 | false |

snli -1 labels | I'm trying to train a model on the SNLI dataset. Why does it have so many -1 labels?

```

import nlp

from collections import Counter

data = nlp.load_dataset('snli')['train']

print(Counter(data['label']))

Counter({0: 183416, 2: 183187, 1: 182764, -1: 785})

```

| https://github.com/huggingface/datasets/issues/296 | [

"@jxmorris12 , we use `-1` to label examples for which `gold label` is missing (`gold label = -` in the original dataset). ",

"Thanks @mariamabarham! so the original dataset is missing some labels? That is weird. Is standard practice just to discard those examples training/eval?",

"Yes the original dataset is... | null | 296 | false |

Improve input warning for evaluation metrics | Hi,

I am the author of `bert_score`. Recently, we received [ an issue ](https://github.com/Tiiiger/bert_score/issues/62) reporting a problem in using `bert_score` from the `nlp` package (also see #238 in this repo). After looking into this, I realized that the problem arises from the format `nlp.Metric` takes inpu... | https://github.com/huggingface/datasets/issues/295 | [] | null | 295 | false |

Cannot load arxiv dataset on MacOS? | I am having trouble loading the `"arxiv"` config from the `"scientific_papers"` dataset on MacOS. When I try loading the dataset with:

```python

arxiv = nlp.load_dataset("scientific_papers", "arxiv")

```

I get the following stack trace:

```bash

JSONDecodeError Traceback (most recen... | https://github.com/huggingface/datasets/issues/294 | [

"I couldn't replicate this issue on my macbook :/\r\nCould you try to play with different encodings in `with open(path, encoding=...) as f` in scientific_papers.py:L108 ?",

"I was able to track down the file causing the problem by adding the following to `scientific_papers.py` (starting at line 116):\r\n\r\n```py... | null | 294 | false |

Don't test community datasets | This PR disables testing for community datasets on aws.

It should fix the CI that is currently failing. | https://github.com/huggingface/datasets/pull/293 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/293",

"html_url": "https://github.com/huggingface/datasets/pull/293",

"diff_url": "https://github.com/huggingface/datasets/pull/293.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/293.patch",

"merged_at": "2020-06-22T11:06:59"... | 293 | true |

Update metadata for x_stance dataset | Thank you for featuring the x_stance dataset in your library. This PR updates some metadata:

- Citation: Replace preprint with proceedings

- URL: Use a URL with long-term availability

| https://github.com/huggingface/datasets/pull/292 | [

"Great! Thanks @jvamvas for these updates.\r\n",

"I have fixed a warning. The remaining test failure is due to an unrelated dataset.",

"We just fixed the other dataset on master. Could you rebase from master and push to rerun the CI ?"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/292",

"html_url": "https://github.com/huggingface/datasets/pull/292",

"diff_url": "https://github.com/huggingface/datasets/pull/292.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/292.patch",

"merged_at": "2020-06-23T08:07:24"... | 292 | true |

break statement not required | https://github.com/huggingface/datasets/pull/291 | [

"I guess,test failing due to connection error?",

"We just fixed the other dataset on master. Could you rebase from master and push to rerun the CI ?",

"If I'm not wrong this function returns None if no main class was found.\r\nI think it makes things less clear not to have a return at the end of the function.\r... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/291",

"html_url": "https://github.com/huggingface/datasets/pull/291",

"diff_url": "https://github.com/huggingface/datasets/pull/291.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/291.patch",

"merged_at": null

} | 291 | true | |

ConnectionError - Eli5 dataset download | Hi, I have a problem with downloading Eli5 dataset. When typing `nlp.load_dataset('eli5')`, I get ConnectionError: Couldn't reach https://storage.googleapis.com/huggingface-nlp/cache/datasets/eli5/LFQA_reddit/1.0.0/explain_like_im_five-train_eli5.arrow

I would appreciate if you could help me with this issue. | https://github.com/huggingface/datasets/issues/290 | [

"It should ne fixed now, thanks for reporting this one :)\r\nIt was an issue on our google storage.\r\n\r\nLet me now if you're still facing this issue.",

"It works now, thanks for prompt help!"

] | null | 290 | false |

update xsum | This PR makes the following update to the xsum dataset:

- Manual download is not required anymore

- The dataset can be loaded as follows: `nlp.load_dataset('xsum')`

**Important**

Instead of using an outdated URL to download the data: "https://raw.githubusercontent.com/EdinburghNLP/XSum/master/XSum-Dataset/XSum... | https://github.com/huggingface/datasets/pull/289 | [

"Looks cool!\r\n@mariamabarham can you add a detailed description here what exactly is changed and how the user can load xsum now?",

"And a rebase should solve the conflicts",

"This is a super useful PR :-) @sshleifer - maybe you can take a look at the updated version of xsum if you can use it for your use case... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/289",

"html_url": "https://github.com/huggingface/datasets/pull/289",

"diff_url": "https://github.com/huggingface/datasets/pull/289.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/289.patch",

"merged_at": "2020-06-22T07:20:07"... | 289 | true |

Error at the first example in README: AttributeError: module 'dill' has no attribute '_dill' | /Users/parasol_tree/anaconda3/lib/python3.6/site-packages/tensorflow/python/framework/dtypes.py:469: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

/Users/... | https://github.com/huggingface/datasets/issues/288 | [

"It looks like the bug comes from `dill`. Which version of `dill` are you using ?",

"Thank you. It is version 0.2.6, which version is better?",

"0.2.6 is three years old now, maybe try a more recent one, e.g. the current 0.3.2 if you can?",

"Thanks guys! I upgraded dill and it works.",

"Awesome"

] | null | 288 | false |

fix squad_v2 metric | Fix #280

The imports were wrong | https://github.com/huggingface/datasets/pull/287 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/287",

"html_url": "https://github.com/huggingface/datasets/pull/287",

"diff_url": "https://github.com/huggingface/datasets/pull/287.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/287.patch",

"merged_at": "2020-06-19T08:33:41"... | 287 | true |

Add ANLI dataset. | I completed all the steps in https://github.com/huggingface/nlp/blob/master/CONTRIBUTING.md#how-to-add-a-dataset and pushed the code for ANLI. Please let me know if there are any errors. | https://github.com/huggingface/datasets/pull/286 | [

"Awesome!! Thanks @easonnie.\r\nLet's wait for additional reviews maybe from @lhoestq @patrickvonplaten @jplu"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/286",

"html_url": "https://github.com/huggingface/datasets/pull/286",

"diff_url": "https://github.com/huggingface/datasets/pull/286.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/286.patch",

"merged_at": "2020-06-22T12:23:26"... | 286 | true |

Consistent formatting of citations | #283 | https://github.com/huggingface/datasets/pull/285 | [

"Circle CI shuold be green :-) "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/285",

"html_url": "https://github.com/huggingface/datasets/pull/285",

"diff_url": "https://github.com/huggingface/datasets/pull/285.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/285.patch",

"merged_at": "2020-06-22T08:09:23"... | 285 | true |

Fix manual download instructions | This PR replaces the static `DatasetBuilder` variable `MANUAL_DOWNLOAD_INSTRUCTIONS` with a property function `manual_download_instructions()`.

Some datasets like XTREME and all WMT need the manual data dir only for a small fraction of the possible configs.

After some brainstorming with @mariamabarham and @lhoestq... | https://github.com/huggingface/datasets/pull/284 | [

"Verified that this works, thanks!",

"But I get\r\n```python\r\nConnectionError: Couldn't reach https://s3.amazonaws.com/datasets.huggingface.co/nlp/datasets/./datasets/wmt16/wmt16.py\r\n```\r\nWhen I try from jupyter on brutasse or my mac. (the jupyter server is run from transformers).\r\n\r\n\r\nBoth machines c... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/284",

"html_url": "https://github.com/huggingface/datasets/pull/284",

"diff_url": "https://github.com/huggingface/datasets/pull/284.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/284.patch",

"merged_at": "2020-06-19T08:24:19"... | 284 | true |

Consistent formatting of citations | The citations are all of a different format, some have "```" and have text inside, others are proper bibtex.

Can we make it so that they are all proper citations, i.e. parseable according to the BibTeX spec:

https://bibtexparser.readthedocs.io/en/master/ | https://github.com/huggingface/datasets/issues/283 | [] | null | 283 | false |

Update dataset_info from gcs | Some datasets are hosted on gcs (wikipedia for example). In this PR I make sure that, when a user loads such datasets, the file_instructions are built using the dataset_info.json from gcs and not from the info extracted from the local `dataset_infos.json` (the one that contains the info for each config). Indeed local fi... | https://github.com/huggingface/datasets/pull/282 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/282",

"html_url": "https://github.com/huggingface/datasets/pull/282",

"diff_url": "https://github.com/huggingface/datasets/pull/282.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/282.patch",

"merged_at": "2020-06-18T16:24:51"... | 282 | true |

Private/sensitive data | Hi all,

Thanks for this fantastic library, it makes it very easy to do prototyping for NLP projects interchangeably between TF/Pytorch.

Unfortunately, there is data that cannot easily be shared publicly as it may contain sensitive information.

Is there support/a plan to support such data with NLP, e.g. by readin... | https://github.com/huggingface/datasets/issues/281 | [

"Hi @MFreidank, you should already be able to load a dataset from local sources, indeed. (ping @lhoestq and @jplu)\r\n\r\nWe're also thinking about the ability to host private datasets on a hosted bucket with permission management, but that's further down the road.",

"Hi @MFreidank, it is possible to load a datas... | null | 281 | false |

Error with SquadV2 Metrics | I can't seem to import squad v2 metrics.

**squad_metric = nlp.load_metric('squad_v2')**

**This throws me an error.:**

```

ImportError Traceback (most recent call last)

<ipython-input-8-170b6a170555> in <module>

----> 1 squad_metric = nlp.load_metric('squad_v2')

~/env/lib6... | https://github.com/huggingface/datasets/issues/280 | [] | null | 280 | false |

Dataset Preprocessing Cache with .map() function not working as expected | I've been having issues with reproducibility when loading and processing datasets with the `.map` function. I was only able to resolve them by clearing all of the cache files on my system.

Is there a way to disable using the cache when processing a dataset? As I make minor processing changes on the same dataset, I ... | https://github.com/huggingface/datasets/issues/279 | [

"When you're processing a dataset with `.map`, it checks whether it has already done this computation using a hash based on the function and the input (using some fancy serialization with `dill`). If you found that it doesn't work as expected in some cases, let us know !\r\n\r\nGiven that, you can still force to re... | null | 279 | false |

MemoryError when loading German Wikipedia | Hi, first off let me say thank you for all the awesome work you're doing at Hugging Face across all your projects (NLP, Transformers, Tokenizers) - they're all amazing contributions to us working with NLP models :)

I'm trying to download the German Wikipedia dataset as follows:

```

wiki = nlp.load_dataset("wikip... | https://github.com/huggingface/datasets/issues/278 | [

"Hi !\r\n\r\nAs you noticed, \"big\" datasets like Wikipedia require apache beam to be processed.\r\nHowever users usually don't have an apache beam runtime available (spark, dataflow, etc.) so our goal for this library is to also make available processed versions of these datasets, so that users can just download ... | null | 278 | false |

Empty samples in glue/qqp | ```

qqp = nlp.load_dataset('glue', 'qqp')

print(qqp['train'][310121])

print(qqp['train'][362225])

```

```

{'question1': 'How can I create an Android app?', 'question2': '', 'label': 0, 'idx': 310137}

{'question1': 'How can I develop android app?', 'question2': '', 'label': 0, 'idx': 362246}

```

Notice that que... | https://github.com/huggingface/datasets/issues/277 | [

"We are only wrapping the original dataset.\r\n\r\nMaybe try to ask on the GLUE mailing list or reach out to the original authors?",

"Tanks for the suggestion, I'll try to ask GLUE benchmark.\r\nI'll first close the issue, post the following up here afterwards, and reopen the issue if needed. "

] | null | 277 | false |

Fix metric compute (original_instructions missing) | When loading arrow data we added in cc8d250 a way to specify the instructions that were used to store them with the loaded dataset.

However metrics load data the same way but don't need instructions (we use one single file).

In this PR I just make `original_instructions` optional when reading files to load a `Datas... | https://github.com/huggingface/datasets/pull/276 | [

"Awesome! This is working now:\r\n\r\n```python\r\nimport nlp \r\nseqeval = nlp.load_metric(\"seqeval\") \r\ny_true = [['O', 'O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']] \r\ny_pred = [['O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']] ... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/276",

"html_url": "https://github.com/huggingface/datasets/pull/276",

"diff_url": "https://github.com/huggingface/datasets/pull/276.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/276.patch",

"merged_at": "2020-06-18T07:41:43"... | 276 | true |

NonMatchingChecksumError when loading pubmed dataset | I get this error when I run `nlp.load_dataset('scientific_papers', 'pubmed', split = 'train[:50%]')`.

The error is:

```

---------------------------------------------------------------------------

NonMatchingChecksumError Traceback (most recent call last)

<ipython-input-2-7742dea167d0> in <module... | https://github.com/huggingface/datasets/issues/275 | [

"For some reason the files are not available for unauthenticated users right now (like the download service of this package). Instead of downloading the right files, it downloads the html of the error.\r\nAccording to the error it should be back again in 24h.\r\n\r\n, the Allociné dataset must be loaded with :

```python

dataset = load_dataset('allocine', 'allocine')

```

This is redundant, as there is only one "dataset configuration", and should only be:

```python

dataset = load_dataset('allocine')

```

This ... | https://github.com/huggingface/datasets/pull/271 | [

"Actually when there is only one configuration, then you don't need to specify the configuration in `load_dataset`. You can run:\r\n```python\r\ndataset = load_dataset('allocine')\r\n```\r\nand it works.\r\n\r\nMaybe we should take that into account in the nlp viewer @srush ?",

"@lhoestq Just to understand the ex... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/271",

"html_url": "https://github.com/huggingface/datasets/pull/271",

"diff_url": "https://github.com/huggingface/datasets/pull/271.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/271.patch",

"merged_at": null

} | 271 | true |

c4 dataset is not viewable in nlpviewer demo | I get the following error when I try to view the c4 dataset in [nlpviewer](https://huggingface.co/nlp/viewer/)

```python

ModuleNotFoundError: No module named 'langdetect'

Traceback:

File "/home/sasha/.local/lib/python3.7/site-packages/streamlit/ScriptRunner.py", line 322, in _run_script

exec(code, module.__d... | https://github.com/huggingface/datasets/issues/270 | [

"C4 is too large to be shown in the viewer"

] | null | 270 | false |

Error in metric.compute: missing `original_instructions` argument | I'm running into an error using metrics for computation in the latest master as well as version 0.2.1. Here is a minimal example:

```python

import nlp

rte_metric = nlp.load_metric('glue', name="rte")

rte_metric.compute(

[0, 0, 1, 1],

[0, 1, 0, 1],

)

```

```

181 # Read the predictio... | https://github.com/huggingface/datasets/issues/269 | [] | null | 269 | false |

add Rotten Tomatoes Movie Review sentences sentiment dataset | Sentence-level movie reviews v1.0 from here: http://www.cs.cornell.edu/people/pabo/movie-review-data/ | https://github.com/huggingface/datasets/pull/268 | [

"@jplu @thomwolf @patrickvonplaten @lhoestq -- How do I request reviewers? Thanks."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/268",

"html_url": "https://github.com/huggingface/datasets/pull/268",

"diff_url": "https://github.com/huggingface/datasets/pull/268.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/268.patch",

"merged_at": "2020-06-18T07:46:23"... | 268 | true |

How can I load/find WMT en-romanian? | I believe it is from `wmt16`

When I run

```python

wmt = nlp.load_dataset('wmt16')

```

I get:

```python

AssertionError: The dataset wmt16 with config cs-en requires manual data.

Please follow the manual download instructions: Some of the wmt configs here, require a manual download.

Please look into wm... | https://github.com/huggingface/datasets/issues/267 | [

"I will take a look :-) "

] | null | 267 | false |

Add sort, shuffle, test_train_split and select methods | Add a bunch of methods to reorder/split/select rows in a dataset:

- `dataset.select(indices)`: Create a new dataset with rows selected following the list/array of indices (which can have a different size than the dataset and contain duplicated indices, the only constraint is that all the integers in the list must be sm... | https://github.com/huggingface/datasets/pull/266 | [

"Nice !\r\n\r\nAlso it looks like we can have a train_test_split method for free:\r\n```python\r\ntrain_indices, test_indices = train_test_split(range(len(dataset)))\r\ntrain = dataset.sort(indices=train_indices)\r\ntest = dataset.sort(indices=test_indices)\r\n```\r\n\r\nand a shuffling method for free:\r\n```pytho... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/266",

"html_url": "https://github.com/huggingface/datasets/pull/266",

"diff_url": "https://github.com/huggingface/datasets/pull/266.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/266.patch",

"merged_at": "2020-06-18T16:23:23"... | 266 | true |

Add pyarrow warning colab | When a user installs `nlp` on google colab, then google colab doesn't update pyarrow, and the runtime needs to be restarted to use the updated version of pyarrow.

This is an issue because `nlp` requires the updated version to work correctly.

In this PR I added an error that is shown to the user in google colab if... | https://github.com/huggingface/datasets/pull/265 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/265",

"html_url": "https://github.com/huggingface/datasets/pull/265",

"diff_url": "https://github.com/huggingface/datasets/pull/265.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/265.patch",

"merged_at": "2020-06-12T08:14:16"... | 265 | true |

Fix small issues creating dataset | Fix many small issues mentioned in #249:

- don't force to install apache beam for commands

- fix None cache dir when using `dl_manager.download_custom`

- added new extras in `setup.py` named `dev` that contains tests and quality dependencies

- mock dataset sizes when running tests with dummy data

- add a note abou... | https://github.com/huggingface/datasets/pull/264 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/264",

"html_url": "https://github.com/huggingface/datasets/pull/264",

"diff_url": "https://github.com/huggingface/datasets/pull/264.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/264.patch",

"merged_at": "2020-06-12T08:15:56"... | 264 | true |

[Feature request] Support for external modality for language datasets | # Background

In recent years many researchers have advocated that learning meanings from text-based only datasets is just like asking a human to "learn to speak by listening to the radio" [[E. Bender and A. Koller,2020](https://openreview.net/forum?id=GKTvAcb12b), [Y. Bisk et. al, 2020](https://arxiv.org/abs/2004.10... | https://github.com/huggingface/datasets/issues/263 | [

"Thanks a lot, @aleSuglia for the very detailed and introductive feature request.\r\nIt seems like we could build something pretty useful here indeed.\r\n\r\nOne of the questions here is that Arrow doesn't have built-in support for generic \"tensors\" in records but there might be ways to do that in a clean way. We... | null | 263 | false |

Add new dataset ANLI Round 1 | Adding new dataset [ANLI](https://github.com/facebookresearch/anli/).

I'm not familiar with how to add a new dataset. Let me know if there is any issue. I only include round 1 data here. There will be round 2, round 3 and more in the future with potentially different formats. I think it will be better to separate them. | https://github.com/huggingface/datasets/pull/262 | [

"Hello ! Thanks for adding this one :)\r\n\r\nThis looks great, you just have to do the last steps to make the CI pass.\r\nI can see that two things are missing:\r\n1. the dummy data that is used to test that the script is working as expected\r\n2. the json file with all the infos about the dataset\r\n\r\nYou can s... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/262",

"html_url": "https://github.com/huggingface/datasets/pull/262",

"diff_url": "https://github.com/huggingface/datasets/pull/262.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/262.patch",

"merged_at": null

} | 262 | true |

Downloading dataset error with pyarrow.lib.RecordBatch | I am trying to download `sentiment140` and I have the following error

```

/usr/local/lib/python3.6/dist-packages/nlp/load.py in load_dataset(path, name, version, data_dir, data_files, split, cache_dir, download_config, download_mode, ignore_verifications, save_infos, **config_kwargs)

518 download_mode=... | https://github.com/huggingface/datasets/issues/261 | [

"When you install `nlp` for the first time on a Colab runtime, it updates the `pyarrow` library that was already on colab. This update shows this message on colab:\r\n```\r\nWARNING: The following packages were previously imported in this runtime:\r\n [pyarrow]\r\nYou must restart the runtime in order to use newly... | null | 261 | false |

Consistency fixes | A few bugs I've found while hacking | https://github.com/huggingface/datasets/pull/260 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/260",

"html_url": "https://github.com/huggingface/datasets/pull/260",

"diff_url": "https://github.com/huggingface/datasets/pull/260.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/260.patch",

"merged_at": "2020-06-11T10:34:36"... | 260 | true |

documentation missing how to split a dataset | I am trying to understand how to split a dataset (as arrow_dataset).

I know I can do something like this to access a split which is already in the original dataset :

`ds_test = nlp.load_dataset('imdb', split='test')`

But how can I split ds_test into a test and a validation set (without reading the data into m... | https://github.com/huggingface/datasets/issues/259 | [

"this seems to work for my specific problem:\r\n\r\n`self.train_ds, self.test_ds, self.val_ds = map(_prepare_ds, ('train', 'test[:25%]+test[50%:75%]', 'test[75%:]'))`",

"Currently you can indeed split a dataset using `ds_test = nlp.load_dataset('imdb, split='test[:5000]')` (works also with percentages).\r\n\r\nHo... | null | 259 | false |

Why is the dataset after tokenization far larger than the original one? | I tokenize wiki dataset by `map` and cache the results.

```

def tokenize_tfm(example):

example['input_ids'] = hf_fast_tokenizer.convert_tokens_to_ids(hf_fast_tokenizer.tokenize(example['text']))

return example

wiki = nlp.load_dataset('wikipedia', '20200501.en', cache_dir=cache_dir)['train']

wiki.map(token... | https://github.com/huggingface/datasets/issues/258 | [

"Hi ! This is because `.map` added the new column `input_ids` to the dataset, and so all the other columns were kept. Therefore the dataset size increased a lot.\r\n If you want to only keep the `input_ids` column, you can stash the other ones by specifying `remove_columns=[\"title\", \"text\"]` in the arguments of... | null | 258 | false |

Tokenizer pickling issue fix not landed in `nlp` yet? | Unless I recreate an arrow_dataset from my loaded nlp dataset myself (which I think does not use the cache by default), I get the following error when applying the map function:

```

dataset = nlp.load_dataset('cos_e')

tokenizer = GPT2TokenizerFast.from_pretrained('gpt2', cache_dir=cache_dir)

for split in datase... | https://github.com/huggingface/datasets/issues/257 | [

"Yes, the new release of tokenizers solves this and should be out soon.\r\nIn the meantime, you can install it with `pip install tokenizers==0.8.0-dev2`",

"If others run into this issue, a quick fix is to use python 3.6 instead of 3.7+. Serialization differences between the 3rd party `dataclasses` package for 3.6... | null | 257 | false |

[Feature request] Add a feature to dataset | Is there a straightforward way to add a field to the arrow_dataset, prior to performing map? | https://github.com/huggingface/datasets/issues/256 | [

"Do you have an example of what you would like to do? (you can just add a field in the output of the unction you give to map and this will add this field in the output table)",

"Given another source of data loaded in, I want to pre-add it to the dataset so that it aligns with the indices of the arrow dataset prio... | null | 256 | false |

Add dataset/piaf | Small SQuAD-like French QA dataset [PIAF](https://www.aclweb.org/anthology/2020.lrec-1.673.pdf) | https://github.com/huggingface/datasets/pull/255 | [

"Very nice !"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/255",

"html_url": "https://github.com/huggingface/datasets/pull/255",

"diff_url": "https://github.com/huggingface/datasets/pull/255.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/255.patch",

"merged_at": "2020-06-12T08:31:27"... | 255 | true |

[Feature request] Be able to remove a specific sample of the dataset | As mentioned in #117, it's currently not possible to remove a sample of the dataset.

But it is an important use case: After applying some preprocessing, some samples might be empty for example. We should be able to remove these samples from the dataset, or at least mark them as `removed` so when iterating the datase... | https://github.com/huggingface/datasets/issues/254 | [

"Oh yes you can now do that with the `dataset.filter()` method that was added in #214 "

] | null | 254 | false |

add flue dataset | This PR adds the FLUE dataset as requested in issue #223. @lbourdois made a detailed description in that issue.

| https://github.com/huggingface/datasets/pull/253 | [

"The dummy data file was wrong. I only fixed it for the book config. Even though the tests are all green here, this should also be fixed for all other configs. Could you take a look there @mariamabarham ? ",

"Hi @mariamabarham \r\n\r\nFLUE can indeed become a very interesting benchmark for french NLP !\r\nUnfortu... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/253",

"html_url": "https://github.com/huggingface/datasets/pull/253",

"diff_url": "https://github.com/huggingface/datasets/pull/253.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/253.patch",

"merged_at": null

} | 253 | true |

NonMatchingSplitsSizesError error when reading the IMDB dataset | Hi!

I am trying to load the `imdb` dataset with this line:

`dataset = nlp.load_dataset('imdb', data_dir='/A/PATH', cache_dir='/A/PATH')`

but I am getting the following error:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/mounts/Users/cisintern/antmarakis/anaconda3/... | https://github.com/huggingface/datasets/issues/252 | [

"I just tried on my side and I didn't encounter your problem.\r\nApparently the script doesn't generate all the examples on your side.\r\n\r\nCan you provide the version of `nlp` you're using ?\r\nCan you try to clear your cache and re-run the code ?",

"I updated it, that was it, thanks!",

"Hello, I am facing t... | null | 252 | false |

Better access to all dataset information | Moves all the dataset info down one level from `dataset.info.XXX` to `dataset.XXX`

This way it's easier to access `dataset.features['label']` for instance

Also, add the original split instructions used to create the dataset in `dataset.split`

Ex:

```

from nlp import load_dataset

stsb = load_dataset('glue', name=... | https://github.com/huggingface/datasets/pull/251 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/251",

"html_url": "https://github.com/huggingface/datasets/pull/251",

"diff_url": "https://github.com/huggingface/datasets/pull/251.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/251.patch",

"merged_at": "2020-06-12T08:12:58"... | 251 | true |

Remove checksum download in c4 | There was a line from the original tfds script that was still there and causing issues when loading the c4 script. This one should fix #233 and allow anyone to load the c4 script to generate the dataset | https://github.com/huggingface/datasets/pull/250 | [

"Commenting again in case [previous thread](https://github.com/huggingface/nlp/pull/233) was inactive.\r\n\r\n@lhoestq I am facing `IsADirectoryError` while downloading with this command.\r\nCan you pls look into it & help me.\r\nI'm using version 0.4.0 of `nlp`.\r\n\r\n```\r\ndataset = load_dataset(\"c4\", 'en', d... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/250",

"html_url": "https://github.com/huggingface/datasets/pull/250",

"diff_url": "https://github.com/huggingface/datasets/pull/250.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/250.patch",

"merged_at": "2020-06-08T09:16:59"... | 250 | true |

[Dataset created] some critical small issues when I was creating a dataset | Hi, I successfully created a dataset and have opened PR #248.

However, I ran into several problems while creating it; they should be easy to fix.

1. Not found dataset_info.json

This should be fixed by #241; I look forward to it being merged.

2. Forced to install `apache_beam`

If we should install it, then it m... | https://github.com/huggingface/datasets/issues/249 | [

"Thanks for noticing all these :) They should be easy to fix indeed",

"Alright I think I fixed all the problems you mentioned. Thanks again, that will be useful for many people.\r\nThere is still more work needed for point 7. but we plan to have some nice docs soon."

] | null | 249 | false |

add Toronto BooksCorpus | 1. I know there is a branch `toronto_books_corpus`

- After I downloaded it, I found it is all non-English and has only one row.

- It seems that it cites the wrong paper

- According to the papers that use it, it is called `BooksCorpus`, not `TorontoBooksCorpus`

2. It uses a text mirror on Google Drive

- `bookscorpu... | https://github.com/huggingface/datasets/pull/248 | [

"Thanks for adding this one !\r\n\r\nAbout the three points you mentioned:\r\n1. I think the `toronto_books_corpus` branch can be removed @mariamabarham ? \r\n2. You can use the download manager to download from Google Drive. For your case you can just do something like \r\n```python\r\nURL = \"https://drive.google.... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/248",

"html_url": "https://github.com/huggingface/datasets/pull/248",

"diff_url": "https://github.com/huggingface/datasets/pull/248.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/248.patch",

"merged_at": "2020-06-12T08:45:02"... | 248 | true |

Make all dataset downloads deterministic by applying `sorted` to glob and os.listdir | This PR makes all dataset loading deterministic by applying `sorted()` to all `glob.glob` and `os.listdir` calls.

Are there other "non-deterministic" functions apart from `glob.glob()` and `os.listdir()` that you can think of @thomwolf @lhoestq @mariamabarham @jplu ?

**Important**

It does break backward c... | https://github.com/huggingface/datasets/pull/247 | [

"That's great!\r\n\r\nI think it would be nice to test \"deterministic-ness\" of datasets in CI if we can do it (should be left for future PR of course)\r\n\r\nHere is a possibility (maybe there are other ways to do it): given that we may soon have efficient and large-scale hashing (cf our discussion on versioning/... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/247",

"html_url": "https://github.com/huggingface/datasets/pull/247",

"diff_url": "https://github.com/huggingface/datasets/pull/247.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/247.patch",

"merged_at": "2020-06-08T09:18:14"... | 247 | true |

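A minimal sketch of the idea in the PR above, using only the standard library. The helper name `list_files_deterministically` is hypothetical and is not taken from the dataset scripts; it only shows that wrapping `glob.glob` and `os.listdir` in `sorted()` gives an order that does not depend on the filesystem.

```python
import glob
import os
import tempfile


def list_files_deterministically(directory, pattern="*"):
    """Return the paths matching `pattern` in a stable, sorted order."""
    return sorted(glob.glob(os.path.join(directory, pattern)))


with tempfile.TemporaryDirectory() as tmp:
    # Create the files in a deliberately unsorted order.
    for name in ["b.txt", "a.txt", "c.txt"]:
        open(os.path.join(tmp, name), "w").close()

    # Both listings are sorted, so they agree regardless of filesystem order.
    names = [os.path.basename(p) for p in list_files_deterministically(tmp, "*.txt")]
    listed = sorted(os.listdir(tmp))
```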
What is the best way to cache a dataset? | For example, if I want to use streamlit with an nlp dataset:

```

@st.cache

def load_data():

    return nlp.load_dataset('squad')

```

This code raises the error "uncachable object"

Right now I work around it with a constant hash function for my specific case:

```

@st.cache(hash_funcs={pyarrow.lib.Buffer: lambda b: 0})

```... | https://github.com/huggingface/datasets/issues/246 | [

"Everything is already cached by default in 🤗nlp (in particular dataset\nloading and all the “map()” operations) so I don’t think you need to do any\nspecific caching in streamlit.\n\nTell us if you feel like it’s not the case.\n\nOn Sat, 6 Jun 2020 at 13:02, Fabrizio Milo <notifications@github.com> wrote:\n\n> Fo... | null | 246 | false |

SST-2 test labels are all -1 | I'm trying to test a model on the SST-2 task, but all the labels I see in the test set are -1.

```

>>> import nlp

>>> glue = nlp.load_dataset('glue', 'sst2')

>>> glue

{'train': Dataset(schema: {'sentence': 'string', 'label': 'int64', 'idx': 'int32'}, num_rows: 67349), 'validation': Dataset(schema: {'sentence': 'st... | https://github.com/huggingface/datasets/issues/245 | [

"this also happened to me with `nlp.load_dataset('glue', 'mnli')`",

"Yes, this is because the test sets for glue are hidden so the labels are\nnot publicly available. You can read the glue paper for more details.\n\nOn Sat, 6 Jun 2020 at 18:16, Jack Morris <notifications@github.com> wrote:\n\n> this also happened... | null | 245 | false |

Add Allociné Dataset | This is a French binary sentiment classification dataset, which was used to train this model: https://huggingface.co/tblard/tf-allocine.

Basically, it's a French "IMDB" dataset, with more reviews.

More info on [this repo](https://github.com/TheophileBlard/french-sentiment-analysis-with-bert). | https://github.com/huggingface/datasets/pull/244 | [

"great work @TheophileBlard ",

"LGTM, thanks a lot for adding dummy data tests :-) Was it difficult to create the correct dummy data folder? ",

"It was pretty easy actually. Documentation is on point !"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/244",

"html_url": "https://github.com/huggingface/datasets/pull/244",

"diff_url": "https://github.com/huggingface/datasets/pull/244.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/244.patch",

"merged_at": "2020-06-11T07:47:26"... | 244 | true |

Specify utf-8 encoding for GLUE | #242

This makes the GLUE-MNLI dataset readable on my machine, not sure if it's a Windows-only bug. | https://github.com/huggingface/datasets/pull/243 | [

"Thanks for fixing the encoding :)"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/243",

"html_url": "https://github.com/huggingface/datasets/pull/243",

"diff_url": "https://github.com/huggingface/datasets/pull/243.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/243.patch",

"merged_at": "2020-06-08T08:42:01"... | 243 | true |

UnicodeDecodeError when downloading GLUE-MNLI | When I run

```python

dataset = nlp.load_dataset('glue', 'mnli')

```

I get an encoding error (could it be because I'm using Windows?) :

```python

# Lots of error log lines later...

~\Miniconda3\envs\nlp\lib\site-packages\tqdm\std.py in __iter__(self)

1128 try:

-> 1129 for obj in iterable:... | https://github.com/huggingface/datasets/issues/242 | [

"It should be good now, thanks for noticing and fixing it ! I would say that it was because you are on windows but not 100% sure",

"On Windows Python supports Unicode almost everywhere, but one of the notable exceptions is open() where it uses the locale encoding schema. So platform independent python scripts wou... | null | 242 | false |

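A minimal sketch of the point made in the comment above: passing `encoding="utf-8"` to `open()` explicitly, so that reading does not depend on the platform's locale encoding. The file name and sample text are made up for illustration.

```python
import os
import tempfile

text = "Allociné café"

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "sample.txt")

    # Write and read with an explicit encoding instead of the locale default.
    with open(path, "w", encoding="utf-8") as f:
        f.write(text)
    with open(path, encoding="utf-8") as f:
        read_back = f.read()
```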
Fix empty cache dir | If the cache dir of a dataset is empty, the dataset fails to load and throws a FileNotFoundError. We could end up with an empty cache dir because there was a line in the code that created the cache dir without using a temp dir. Using a temp dir is useful as it gets renamed to the real cache dir only if the full process is... | https://github.com/huggingface/datasets/pull/241 | [

"Looks great! Will this change force all cached datasets to be redownloaded? But even if it does, it should not be a big problem, I think",

"> Looks great! Will this change force all cached datasets to be redownloaded? But even if it does, it should not be a big problem, I think\r\n\r\nNo it shouldn't force to redo... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/241",

"html_url": "https://github.com/huggingface/datasets/pull/241",

"diff_url": "https://github.com/huggingface/datasets/pull/241.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/241.patch",

"merged_at": "2020-06-08T08:35:31"... | 241 | true |

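A minimal sketch of the temp-dir pattern described in the PR above: build into a temporary directory and rename it to the real cache dir only if the whole process succeeds. The function `prepare_cache_dir`, the `incomplete_` prefix and the two builder callbacks are hypothetical and are not the library's actual implementation.

```python
import os
import shutil
import tempfile


def prepare_cache_dir(cache_dir, build_fn):
    """Run `build_fn` in a temp dir and rename it to `cache_dir` on success."""
    parent = os.path.dirname(os.path.abspath(cache_dir))
    os.makedirs(parent, exist_ok=True)
    # Create the temp dir next to the target so the rename stays on one filesystem.
    tmp_dir = tempfile.mkdtemp(dir=parent, prefix="incomplete_")
    try:
        build_fn(tmp_dir)
        os.rename(tmp_dir, cache_dir)
    except BaseException:
        # On any failure, remove the partial output so no empty cache dir is left.
        shutil.rmtree(tmp_dir, ignore_errors=True)
        raise
    return cache_dir


def write_ok(directory):
    with open(os.path.join(directory, "data.txt"), "w", encoding="utf-8") as f:
        f.write("ok")


def write_fail(directory):
    raise RuntimeError("simulated failure")


root = tempfile.mkdtemp()
good = prepare_cache_dir(os.path.join(root, "good"), write_ok)
try:
    prepare_cache_dir(os.path.join(root, "bad"), write_fail)
except RuntimeError:
    pass
```

After the failed run, only the `good` directory exists under `root`, so a later load never sees a half-built cache.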
Deterministic dataset loading | When calling:

```python

import nlp

dataset = nlp.load_dataset("trivia_qa", split="validation[:1%]")

```

the resulting dataset is not deterministic across different Google Colab sessions.

After talking to @thomwolf, I suspect the reason to be the use of `glob.glob` in line:

https://github.com/huggingface/nlp/blob/2e0... | https://github.com/huggingface/datasets/issues/240 | [

"Yes good point !",

"I think using `sorted(glob.glob())` would actually solve this problem. Can you think of other reasons why dataset loading might not be deterministic? @mariamabarham @yjernite @lhoestq @thomwolf . \r\n\r\nI can do a sweep through the dataset scripts and fix the glob.glob() if you guys are ok w... | null | 240 | false |

[Creating new dataset] Not found dataset_info.json | Hi, I am trying to create Toronto Book Corpus. #131

I ran

`~/nlp % python nlp-cli test datasets/bookcorpus --save_infos --all_configs`

but this doesn't create `dataset_info.json` and still tries to use it

```

INFO:nlp.load:Checking datasets/bookcorpus/bookcorpus.py for additional imports.

INFO:filelock:Lock 1397953257... | https://github.com/huggingface/datasets/issues/239 | [

"I think you can just `rm` this directory and it should be good :)",

"@lhoestq - this seems to happen quite often (already the 2nd issue). Can we maybe delete this automatically?",

"Yes I have an idea of what's going on. I'm sure I can fix that",

"Hi, I rebase my local copy to `fix-empty-cache-dir`, and try t... | null | 239 | false |

[Metric] Bertscore : Warning : Empty candidate sentence; Setting recall to be 0. | When running BERT-Score, I get this warning:

> Warning: Empty candidate sentence; Setting recall to be 0.

Code :

```

import nlp

metric = nlp.load_metric("bertscore")

scores = metric.compute(["swag", "swags"], ["swags", "totally something different"], lang="en", device=0)

```

---

**What am I do... | https://github.com/huggingface/datasets/issues/238 | [

"This print statement comes from the official implementation of bert_score (see [here](https://github.com/Tiiiger/bert_score/blob/master/bert_score/utils.py#L343)). The warning shows up only if the attention mask outputs no candidate.\r\nRight now we want to only use official code for metrics to have fair evaluatio... | null | 238 | false |

Can't download MultiNLI | When I try to download MultiNLI with

```python

dataset = load_dataset('multi_nli')

```

I get this long error:

```python

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

<ipython-input-13-3b11f6be4cb9> in <m... | https://github.com/huggingface/datasets/issues/237 | [

"You should use `load_dataset('glue', 'mnli')`",

"Thanks! I thought I had to use the same code displayed in the live viewer:\r\n```python\r\n!pip install nlp\r\nfrom nlp import load_dataset\r\ndataset = load_dataset('multi_nli', 'plain_text')\r\n```\r\nYour suggestion works, even if then I got a different issue (... | null | 237 | false |

CompGuessWhat?! dataset | Hello,

Thanks for the amazing library that you put together. I'm Alessandro Suglia, the first author of CompGuessWhat?!, a recently released dataset for grounded language learning accepted to ACL 2020 ([https://compguesswhat.github.io](https://compguesswhat.github.io)).

This pull-request adds the CompGuessWhat?! ... | https://github.com/huggingface/datasets/pull/236 | [

"Hi @aleSuglia, thanks for this great PR. Indeed you can have both datasets in one file. You need to add a config class which will allow you to specify the different subdataset names and then you will be able to load them as follows.\r\nnlp.load_dataset(\"compguesswhat\", \"compguesswhat-gameplay\") \r\nnlp.load_d... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/236",

"html_url": "https://github.com/huggingface/datasets/pull/236",

"diff_url": "https://github.com/huggingface/datasets/pull/236.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/236.patch",

"merged_at": "2020-06-11T07:45:21"... | 236 | true |

Add experimental datasets | ## Adding an *experimental datasets* folder

After using the 🤗nlp library for some time, I find that while it makes it super easy to create new memory-mapped datasets with lots of cool utilities, a lot of what I want to do doesn't work well with the current `MockDownloader` based testing paradigm, making it hard to ... | https://github.com/huggingface/datasets/pull/235 | [

"I think it would be nicer to not create a new folder `datasets_experimental` , but just put your datasets also into the folder `datasets` for the following reasons:\r\n\r\n- From my point of view, the datasets are not very different from the other datasets (assuming that we soon have C4, and the beam datasets) so ... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/235",

"html_url": "https://github.com/huggingface/datasets/pull/235",

"diff_url": "https://github.com/huggingface/datasets/pull/235.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/235.patch",

"merged_at": "2020-06-12T15:38:55"... | 235 | true |

Huggingface NLP, Uploading custom dataset | Hello,

Does anyone know how we can call our custom dataset using the nlp.load command? Let's say that I have a dataset based on the same format as that of squad-v1.1, how am I supposed to load it using huggingface nlp.

Thank you! | https://github.com/huggingface/datasets/issues/234 | [

"What do you mean by 'custom'? You may want to elaborate when asking a question.\r\n\r\nAnyway, there are two things you may be interested in:\r\n`nlp.Dataset.from_file` and `load_dataset(..., cache_dir=)`",

"To load a dataset you need to have a script that defines the format of the examples, the splits and the way to ... | null | 234 | false |

Fail to download c4 english corpus | I ran the following code to download the C4 English corpus.

```

dataset = nlp.load_dataset('c4', 'en', beam_runner='DirectRunner'

, data_dir='/mypath')

```

and it failed as follows

```

Downloading and preparing dataset c4/en (download: Unknown size, generated: Unknown size, total: Unknown size) to /home/adam/.... | https://github.com/huggingface/datasets/issues/233 | [

"Hello ! Thanks for noticing this bug, let me fix that.\r\n\r\nAlso for information, as specified in the changelog of the latest release, C4 currently needs to have a runtime for apache beam to work on. Apache beam is used to process this very big dataset and it can work on dataflow, spark, flink, apex, etc. You ca... | null | 233 | false |

Nlp cli fix endpoints | With this PR users will be able to upload their own datasets and metrics.

As mentioned in #181, I had to use the new endpoints and revert the use of dataclasses (just in case we have changes in the API in the future).

We now distinguish commands for datasets and commands for metrics:

```bash

nlp-cli upload_data... | https://github.com/huggingface/datasets/pull/232 | [

"LGTM 👍 "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/232",

"html_url": "https://github.com/huggingface/datasets/pull/232",

"diff_url": "https://github.com/huggingface/datasets/pull/232.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/232.patch",

"merged_at": "2020-06-08T09:02:57"... | 232 | true |

Add .download to MockDownloadManager | One method from the DownloadManager was missing and some users couldn't run the tests because of that.

@yjernite | https://github.com/huggingface/datasets/pull/231 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/231",

"html_url": "https://github.com/huggingface/datasets/pull/231",

"diff_url": "https://github.com/huggingface/datasets/pull/231.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/231.patch",

"merged_at": "2020-06-03T14:25:54"... | 231 | true |

Don't force to install apache beam for wikipedia dataset | As pointed out in #227, we shouldn't force users to install apache beam if the processed dataset can be downloaded. I moved the imports of some datasets to avoid this problem | https://github.com/huggingface/datasets/pull/230 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/230",

"html_url": "https://github.com/huggingface/datasets/pull/230",

"diff_url": "https://github.com/huggingface/datasets/pull/230.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/230.patch",

"merged_at": "2020-06-03T14:34:07"... | 230 | true |

Rename dataset_infos.json to dataset_info.json | The file required for viewing in the live nlp viewer is named dataset_info.json

"\r\nThis was actually the right name. `dataset_infos.json` is used to have the infos of all the dataset configurations.\r\n\r\nOn the other hand `dataset_info.json` (without 's') is a cache file with the info of one specific configuration.\r\n\r\nTo fix #228, we probably just have to clear and reload the nlp-viewe... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/229",

"html_url": "https://github.com/huggingface/datasets/pull/229",

"diff_url": "https://github.com/huggingface/datasets/pull/229.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/229.patch",

"merged_at": null

} | 229 | true |

Not able to access the XNLI dataset | When I try to access the XNLI dataset, the plain_text option gets selected automatically and then I get the following error.

```

FileNotFoundError: [Errno 2] No such file or directory: '/home/sasha/.cache/huggingface/datasets/xnli/plain_text/1.0.0/dataset_info.json'

Traceback:

File "/... | https://github.com/huggingface/datasets/issues/228 | [

"Added pull request to change the name of the file from dataset_infos.json to dataset_info.json",

"Thanks for reporting this bug !\r\nAs it seems to be just a cache problem, I closed your PR.\r\nI think we might just need to clear and reload the `xnli` cache @srush ? ",

"Update: The dataset_info.json error is g... | null | 228 | false |

Should we still have to force to install apache_beam to download wikipedia ? | Hi, first, thanks to @lhoestq's revolutionary work, I successfully downloaded the processed wikipedia according to the doc. 😍😍😍

But on the first try, it told me to install `apache_beam` and `mwparserfromhell`, which I thought wouldn't be needed according to #204; that was confusing at the time.

Maybe we s... | https://github.com/huggingface/datasets/issues/227 | [

"Thanks for your message 😊 \r\nIndeed users shouldn't have to install those dependencies",

"Got it, feel free to close this issue when you think it’s resolved.",

"It should be good now :)"

] | null | 227 | false |

add BlendedSkillTalk dataset | This PR adds the BlendedSkillTalk dataset, which is used to fine-tune BlenderBot. | https://github.com/huggingface/datasets/pull/226 | [

"Awesome :D"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/226",

"html_url": "https://github.com/huggingface/datasets/pull/226",

"diff_url": "https://github.com/huggingface/datasets/pull/226.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/226.patch",

"merged_at": "2020-06-03T14:37:22"... | 226 | true |

[ROUGE] Different scores with `files2rouge` | It seems that the ROUGE score of `nlp` is lower than the one of `files2rouge`.

Here is a self-contained notebook to reproduce both scores : https://colab.research.google.com/drive/14EyAXValB6UzKY9x4rs_T3pyL7alpw_F?usp=sharing

---

`nlp` : (Only mid F-scores)

>rouge1 0.33508031962733364

rouge2 0.145743337761... | https://github.com/huggingface/datasets/issues/225 | [

"@Colanim unfortunately there are different implementations of the ROUGE metric floating around online which yield different results, and we had to choose one for the package :) We ended up including the one from the google-research repository, which does minimal post-processing before computing the P/R/F scores. If... | null | 225 | false |

[Feature Request/Help] BLEURT model -> PyTorch | Hi, I am interested in porting google research's new BLEURT learned metric to PyTorch (because I wish to do something experimental with language generation and backpropping through BLEURT). I noticed that you guys don't have it yet so I am partly just asking if you plan to add it (@thomwolf said you want to do so on Tw... | https://github.com/huggingface/datasets/issues/224 | [

"Is there any update on this? \r\n\r\nThanks!",

"Hitting this error when using bleurt with PyTorch ...\r\n\r\n```\r\nUnrecognizedFlagError: Unknown command line flag 'f'\r\n```\r\n... and I'm assuming because it was built for TF specifically. Is there a way to use this metric in PyTorch?",

"We currently provid... | null | 224 | false |

[Feature request] Add FLUE dataset | Hi,

I think it would be interesting to add the FLUE dataset for francophones or anyone wishing to work on French.

In other requests, I read that you are already working on some datasets, and I was wondering if FLUE was planned.

If it is not the case, I can provide each of the cleaned FLUE datasets (in the form... | https://github.com/huggingface/datasets/issues/223 | [

"Hi @lbourdois, yes please share it with us",

"@mariamabarham \r\nI put all the datasets on this drive: https://1drv.ms/u/s!Ao2Rcpiny7RFinDypq7w-LbXcsx9?e=iVsEDh\r\n\r\n\r\nSome information : \r\n• For FLUE, the quote used is\r\n\r\n> @misc{le2019flaubert,\r\n> title={FlauBERT: Unsupervised Language Model Pre... | null | 223 | false |

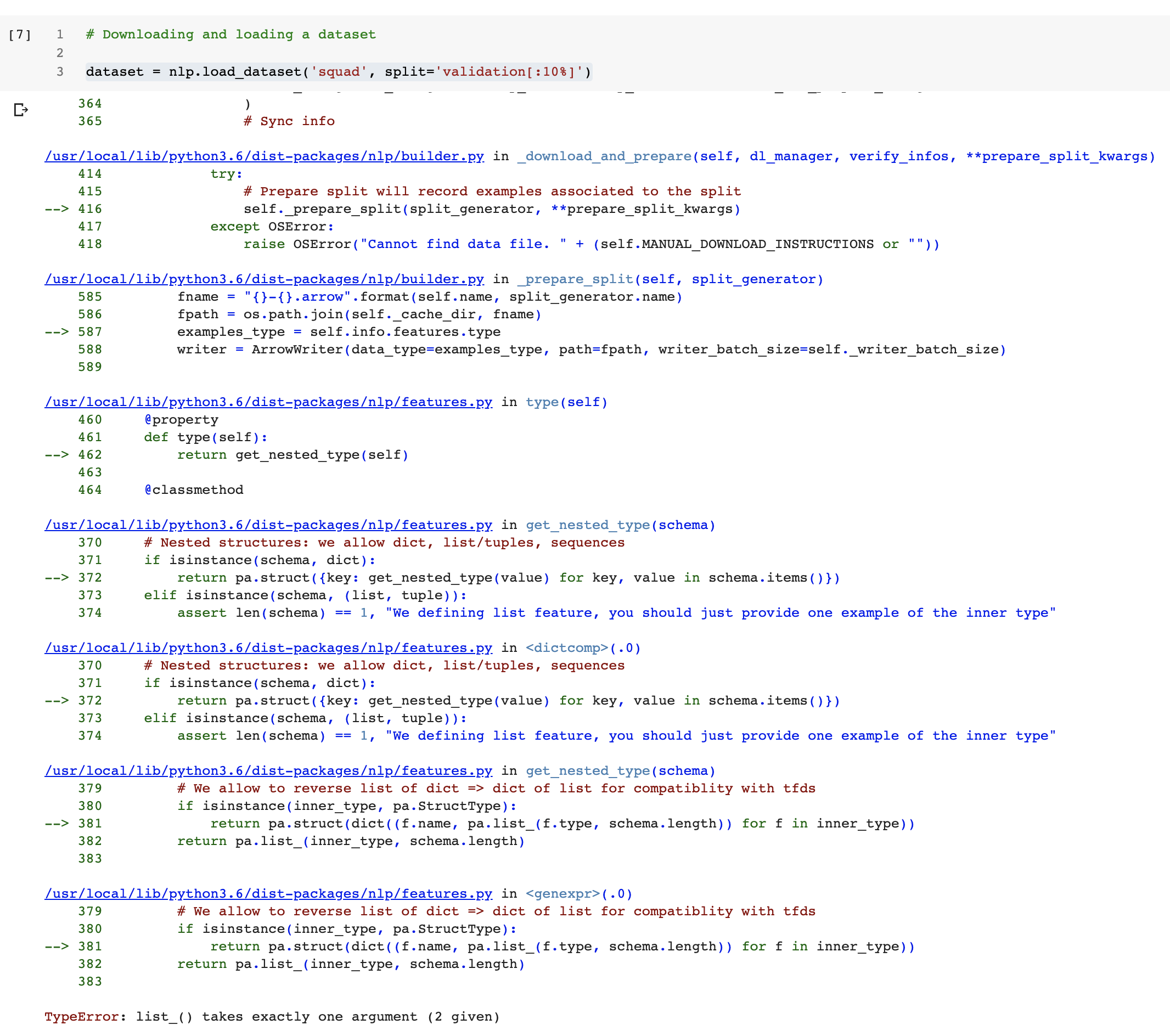

Colab Notebook breaks when downloading the squad dataset | When I run the notebook in Colab

https://colab.research.google.com/github/huggingface/nlp/blob/master/notebooks/Overview.ipynb

it breaks when running this cell:

| https://github.com/huggingface/datasets/issues/222 | [

"The notebook forces version 0.1.0. If I use the latest, things work, I'll run the whole notebook and create a PR.\r\n\r\nBut in the meantime, this issue gets fixed by changing:\r\n`!pip install nlp==0.1.0`\r\nto\r\n`!pip install nlp`",

"It still breaks very near the end\r\n\r\n\r\n\r\nTo fix the CI you can do:\r\n1 - rebase from master\r\n2 - then run `make style` as specified in [CONTRIBUTING.md](https://github.com/huggingface/nlp/blob/master/CONTRIBUTING.md) ?"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/221",

"html_url": "https://github.com/huggingface/datasets/pull/221",

"diff_url": "https://github.com/huggingface/datasets/pull/221.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/221.patch",

"merged_at": "2020-05-29T15:02:23"... | 221 | true |

dataset_arcd | Added Arabic Reading Comprehension Dataset (ARCD): https://arxiv.org/abs/1906.05394 | https://github.com/huggingface/datasets/pull/220 | [

"you can rebase from master to fix the CI error :)",

"Awesome !"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/220",

"html_url": "https://github.com/huggingface/datasets/pull/220",

"diff_url": "https://github.com/huggingface/datasets/pull/220.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/220.patch",

"merged_at": "2020-05-29T14:57:21"... | 220 | true |

force mwparserfromhell as third party | This should fix your env because you had `mwparserfromhell ` as a first party for `isort` @patrickvonplaten | https://github.com/huggingface/datasets/pull/219 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/219",

"html_url": "https://github.com/huggingface/datasets/pull/219",

"diff_url": "https://github.com/huggingface/datasets/pull/219.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/219.patch",

"merged_at": "2020-05-29T13:30:12"... | 219 | true |

Add Natural Questions and C4 scripts | Scripts are ready !

However, the datasets are neither processed nor directly available from GCP yet. | https://github.com/huggingface/datasets/pull/218 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/218",

"html_url": "https://github.com/huggingface/datasets/pull/218",

"diff_url": "https://github.com/huggingface/datasets/pull/218.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/218.patch",

"merged_at": "2020-05-29T12:31:00"... | 218 | true |

Multi-task dataset mixing | It seems like many of the best performing models on the GLUE benchmark make some use of multitask learning (simultaneous training on multiple tasks).

The [T5 paper](https://arxiv.org/pdf/1910.10683.pdf) highlights multiple ways of mixing the tasks together during finetuning:

- **Examples-proportional mixing** - sam... | https://github.com/huggingface/datasets/issues/217 | [

"I like this feature! I think the first question we should decide on is how to convert all datasets into the same format. In T5, the authors decided to format every dataset into a text-to-text format. If the dataset had \"multiple\" inputs like MNLI, the inputs were concatenated. So in MNLI the input:\r\n\r\n> - **... | null | 217 | false |

❓ How to get ROUGE-2 with the ROUGE metric ? | I'm trying to use ROUGE metric, but I don't know how to get the ROUGE-2 metric.

---

I compute scores with :

```python

import nlp

rouge = nlp.load_metric('rouge')

with open("pred.txt") as p, open("ref.txt") as g:

    for lp, lg in zip(p, g):

        rouge.add([lp], [lg])

score = rouge.compute()

```

... | https://github.com/huggingface/datasets/issues/216 | [

"ROUGE-1 and ROUGE-L shouldn't return the same thing. This is weird",

"For the rouge2 metric you can do\r\n\r\n```python\r\nrouge = nlp.load_metric('rouge')\r\nwith open(\"pred.txt\") as p, open(\"ref.txt\") as g:\r\n for lp, lg in zip(p, g):\r\n rouge.add(lp, lg)\r\nscore = rouge.compute(rouge_types=[\... | null | 216 | false |

NonMatchingSplitsSizesError when loading blog_authorship_corpus | Getting this error when I run `nlp.load_dataset('blog_authorship_corpus')`.

```

raise NonMatchingSplitsSizesError(str(bad_splits))

nlp.utils.info_utils.NonMatchingSplitsSizesError: [{'expected': SplitInfo(name='train',

num_bytes=610252351, num_examples=532812, dataset_name='blog_authorship_corpus'),

'recorded... | https://github.com/huggingface/datasets/issues/215 | [

"I just ran it on colab and got this\r\n```\r\n[{'expected': SplitInfo(name='train', num_bytes=610252351, num_examples=532812,\r\ndataset_name='blog_authorship_corpus'), 'recorded': SplitInfo(name='train',\r\nnum_bytes=611607465, num_examples=533285, dataset_name='blog_authorship_corpus')},\r\n{'expected': SplitInf... | null | 215 | false |

[arrow_dataset.py] add new filter function | The `.map()` function is super useful, but can IMO be a bit tedious when filtering certain examples.

I think filtering out examples is also a very common operation people would like to perform on datasets.

This PR is a proposal to add a `.filter()` function in the same spirit as the `.map()` function.

Here is a ... | https://github.com/huggingface/datasets/pull/214 | [

"I agree that a `.filter` method would be VERY useful and appreciated. I'm not a big fan of using `flatten_nested` as it completely breaks down the structure of the example and it may create bugs. Right now I think it may not work for nested structures. Maybe there's a simpler way that we've not figured out yet.",

... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/214",

"html_url": "https://github.com/huggingface/datasets/pull/214",

"diff_url": "https://github.com/huggingface/datasets/pull/214.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/214.patch",

"merged_at": "2020-05-29T11:32:20"... | 214 | true |

better message if missing beam options | WDYT @yjernite ?

For example:

```python

dataset = nlp.load_dataset('wikipedia', '20200501.aa')

```

Raises:

```

MissingBeamOptions: Trying to generate a dataset using Apache Beam, yet no Beam Runner or PipelineOptions() has been provided in `load_dataset` or in the builder arguments. For big datasets it has to ru... | https://github.com/huggingface/datasets/pull/213 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/213",

"html_url": "https://github.com/huggingface/datasets/pull/213",

"diff_url": "https://github.com/huggingface/datasets/pull/213.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/213.patch",

"merged_at": "2020-05-29T09:51:16"... | 213 | true |

have 'add' and 'add_batch' for metrics | This should fix #116

Previously the `.add` method of metrics expected a batch of examples.

Now `.add` expects one prediction/reference and `.add_batch` expects a batch.

I think it is more coherent with the way the ArrowWriter works. | https://github.com/huggingface/datasets/pull/212 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/212",

"html_url": "https://github.com/huggingface/datasets/pull/212",

"diff_url": "https://github.com/huggingface/datasets/pull/212.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/212.patch",

"merged_at": "2020-05-29T10:41:04"... | 212 | true |

[Arrow writer, Trivia_qa] Could not convert TagMe with type str: converting to null type | Running the following code

```

import nlp

ds = nlp.load_dataset("trivia_qa", "rc", split="validation[:1%]") # this might take 2.3 min to download but it's cached afterwards...

ds.map(lambda x: x, load_from_cache_file=False)

```

triggers an `ArrowInvalid: Could not convert TagMe with type str: converting to n... | https://github.com/huggingface/datasets/issues/211 | [

"Here the full error trace:\r\n\r\n```\r\nArrowInvalid Traceback (most recent call last)\r\n<ipython-input-1-7aaf3f011358> in <module>\r\n 1 import nlp\r\n 2 ds = nlp.load_dataset(\"trivia_qa\", \"rc\", split=\"validation[:1%]\") # this might take 2.3 min to download but it's... | null | 211 | false |

fix xnli metric kwargs description | The text was wrong as noticed in #202 | https://github.com/huggingface/datasets/pull/210 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/210",

"html_url": "https://github.com/huggingface/datasets/pull/210",

"diff_url": "https://github.com/huggingface/datasets/pull/210.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/210.patch",

"merged_at": "2020-05-28T13:22:10"... | 210 | true |

Add a Google Drive exception for small files | I tried to use the ``nlp`` library to load personal datasets. I mainly copy-pasted the code for the ``multi-news`` dataset because my files are stored on Google Drive.

One of my datasets is small (< 25 MB), so Drive can verify it without asking the user for authorization. This makes the download start directly... | https://github.com/huggingface/datasets/pull/209 | [

"Can you run the style formatting tools to pass the code quality test?\r\n\r\nYou can find all the details in CONTRIBUTING.md: https://github.com/huggingface/nlp/blob/master/CONTRIBUTING.md#how-to-contribute-to-nlp",

"Nice ! ",

"``make style`` done! Thanks for the approvals."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/209",

"html_url": "https://github.com/huggingface/datasets/pull/209",

"diff_url": "https://github.com/huggingface/datasets/pull/209.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/209.patch",

"merged_at": "2020-05-28T15:15:04"... | 209 | true |

[Dummy data] insert config name instead of config | Thanks @yjernite for letting me know. In the dummy data command, the config name should be passed to the dataset builder and not the config itself.

Also, @lhoestq fixed a small import bug introduced by the beam command, I think. | https://github.com/huggingface/datasets/pull/208 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/208",

"html_url": "https://github.com/huggingface/datasets/pull/208",

"diff_url": "https://github.com/huggingface/datasets/pull/208.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/208.patch",

"merged_at": "2020-05-28T12:48:00"... | 208 | true |

Remove test set from NLP viewer | While the new [NLP viewer](https://huggingface.co/nlp/viewer/) is a great tool, I think it would be best to outright remove the option of looking at the test sets. At the very least, a warning should be displayed to users before showing the test set. Newcomers to the field might not be aware of best practices, and smal... | https://github.com/huggingface/datasets/issues/207 | [

"~is the viewer also open source?~\r\n[is a streamlit app!](https://docs.streamlit.io/en/latest/getting_started.html)",

"Appears that [two thirds of those polled on Twitter](https://twitter.com/srush_nlp/status/1265734497632477185) are in favor of _some_ mechanism for averting eyeballs from the test data.",

"We... | null | 207 | false |

[Question] Combine 2 datasets which have the same columns | Hi,

I am using ``nlp`` to load personal datasets. I created summarization datasets in multiple languages based on Wikinews. I have one dataset for English and one for German (French will be ready soon as well). I want to keep these datasets independent because they need different pre-processing (add different task-... | https://github.com/huggingface/datasets/issues/206 | [

"We are thinking about ways to combine datasets for T5 in #217, feel free to share your thoughts about this.",

"Ok great! I will look at it. Thanks"

] | null | 206 | false |

Better arrow dataset iter | I tried to play around with `tf.data.Dataset.from_generator` and I found out that the `__iter__` that we have for `nlp.arrow_dataset.Dataset` ignores the format that has been set (torch or tensorflow).

With these changes I should be able to come up with a `tf.data.Dataset` that uses lazy loading, as asked in #193. | https://github.com/huggingface/datasets/pull/205 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/205",

"html_url": "https://github.com/huggingface/datasets/pull/205",

"diff_url": "https://github.com/huggingface/datasets/pull/205.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/205.patch",

"merged_at": "2020-05-27T16:39:56"... | 205 | true |

Add Dataflow support + Wikipedia + Wiki40b | # Add Dataflow support + Wikipedia + Wiki40b

## Support datasets processing with Apache Beam

Some datasets are too big to be processed on a single machine, for example: wikipedia, wiki40b, etc. Apache Beam allows processing datasets on many execution engines like Dataflow, Spark, Flink, etc.

To process such da... | https://github.com/huggingface/datasets/pull/204 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/204",

"html_url": "https://github.com/huggingface/datasets/pull/204",

"diff_url": "https://github.com/huggingface/datasets/pull/204.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/204.patch",

"merged_at": "2020-05-28T08:10:34"... | 204 | true |

Raise an error if no config name for datasets like glue | Some datasets like glue (see #130) and scientific_papers (see #197) have many configs.

For example for glue there are cola, sst2, mrpc etc.

Currently, if a user calls `load_dataset('glue')`, CoLA is loaded by default, which can be confusing. Instead, we should raise an error to let the user know that he has to p... | https://github.com/huggingface/datasets/pull/203 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/203",

"html_url": "https://github.com/huggingface/datasets/pull/203",

"diff_url": "https://github.com/huggingface/datasets/pull/203.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/203.patch",

"merged_at": "2020-05-27T16:40:38"... | 203 | true |

Mistaken `_KWARGS_DESCRIPTION` for XNLI metric | Hi!

The [`_KWARGS_DESCRIPTION`](https://github.com/huggingface/nlp/blob/7d0fa58641f3f462fb2861dcdd6ce7f0da3f6a56/metrics/xnli/xnli.py#L45) for the XNLI metric uses the `Args` and `Returns` text from the [BLEU](https://github.com/huggingface/nlp/blob/7d0fa58641f3f462fb2861dcdd6ce7f0da3f6a56/metrics/bleu/bleu.py#L58) metric:

... | https://github.com/huggingface/datasets/issues/202 | [

"Indeed, good catch ! thanks\r\nFixing it right now"

] | null | 202 | false |

Fix typo in README | https://github.com/huggingface/datasets/pull/201 | [

"Amazing, @LysandreJik!",

"Really did my best!"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/201",

"html_url": "https://github.com/huggingface/datasets/pull/201",

"diff_url": "https://github.com/huggingface/datasets/pull/201.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/201.patch",

"merged_at": "2020-05-26T23:00:56"... | 201 | true |

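The row for pull request 212 above describes splitting metric accumulation into `.add` (one prediction/reference pair) and `.add_batch` (a batch of pairs). A minimal sketch of that split follows. `ToyMetric` and its exact-match `compute` are hypothetical names invented for illustration, and this is not the `nlp` library's actual `Metric` implementation:

```python
class ToyMetric:
    """Hypothetical stand-in for a metric; NOT the nlp library's Metric class."""

    def __init__(self):
        self.predictions = []
        self.references = []

    def add(self, prediction, reference):
        # Accumulate exactly one prediction/reference pair per call.
        self.predictions.append(prediction)
        self.references.append(reference)

    def add_batch(self, predictions, references):
        # Accumulate a batch of pairs; both sequences must have the same length.
        if len(predictions) != len(references):
            raise ValueError("predictions and references must have the same length")
        for prediction, reference in zip(predictions, references):
            self.add(prediction, reference)

    def compute(self):
        # Toy exact-match accuracy, included only so the sketch returns a value.
        if not self.predictions:
            return 0.0
        matches = sum(p == r for p, r in zip(self.predictions, self.references))
        return matches / len(self.predictions)


metric = ToyMetric()
metric.add(1, 1)                    # a single example
metric.add_batch([0, 1], [1, 1])    # a batch of two examples
score = metric.compute()
```

Routing `add_batch` through `add` keeps a single code path for accumulation, so the two entry points cannot drift apart.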